In my last post, I walked through the four-phase framework I use when helping companies evaluate and implement AI: Strategic Framing, Feasibility & Readiness, Solution Architecture, and Implementation & Operationalization. Each phase ends with a decision gate, that is, a checkpoint that forces you to justify moving forward before committing more time and money.

If you’ve been following this series, let’s assume you’ve done the hard work. You’ve defined the problem in business terms and confirmed it’s strategically important (Decision Gate 1). You’ve mapped the workflow, assessed your readiness, and addressed any gaps (Decision Gate 2). Now you’re at Decision Gate 3: does the expected benefit exceed the cost and risk?

To answer that question, you need to identify realistic solutions, understand the tools and infrastructure involved, and scope a pilot that will tell you whether the approach actually works. That’s what this post is about.

Identifying the Right Solution

As I outlined in my last post, the solution options range from business process re-engineering (no technology at all) through off-the-shelf tools, automation platforms, autonomous agents, and custom applications. The right question is always: what is the lowest-complexity solution that achieves the outcome?

Before reaching for any technology, it’s worth taking a hard look at the existing process. Over time, processes tend to become less efficient, not more, especially as they pass from one person to the next. People stop understanding why the process works the way it does and only know what they’re supposed to do, which means opportunities to improve it are missed. The business context changes too: conditions shift, new tools become available, and the process doesn’t keep up. Sometimes the biggest win is re-engineering the process itself, and only then layering technology on top.

When technology is warranted, the choices map to the categories I’ve covered in earlier posts:

- Automation platforms (Post 8) are often underestimated. They are effective for prototyping, testing workflows, and generating value quickly, even if the platform itself may not be suitable for scaling into a production-ready system (Post 9).

- Autonomous agents (Post 10) are powerful but, in my view, not ready for most use cases. That’s going to change as security and governance frameworks mature around them, but today the risk profile is too high for most small and mid-sized businesses.

- Custom applications (Post 12) come in two forms that matter for pilot planning:

  - Standalone: the application receives inputs from other business systems (including email or files), processes data independently, and sends results back. This is the right fit when you’re working with off-the-shelf software that you have limited ability to customize.

  - Integrated: the application is built directly into your existing business systems. This is the right fit when your business runs on custom software that you have the ability to extend.

The standalone vs. integrated distinction matters because it changes the pilot’s scope, the skills required, and the integration risk.

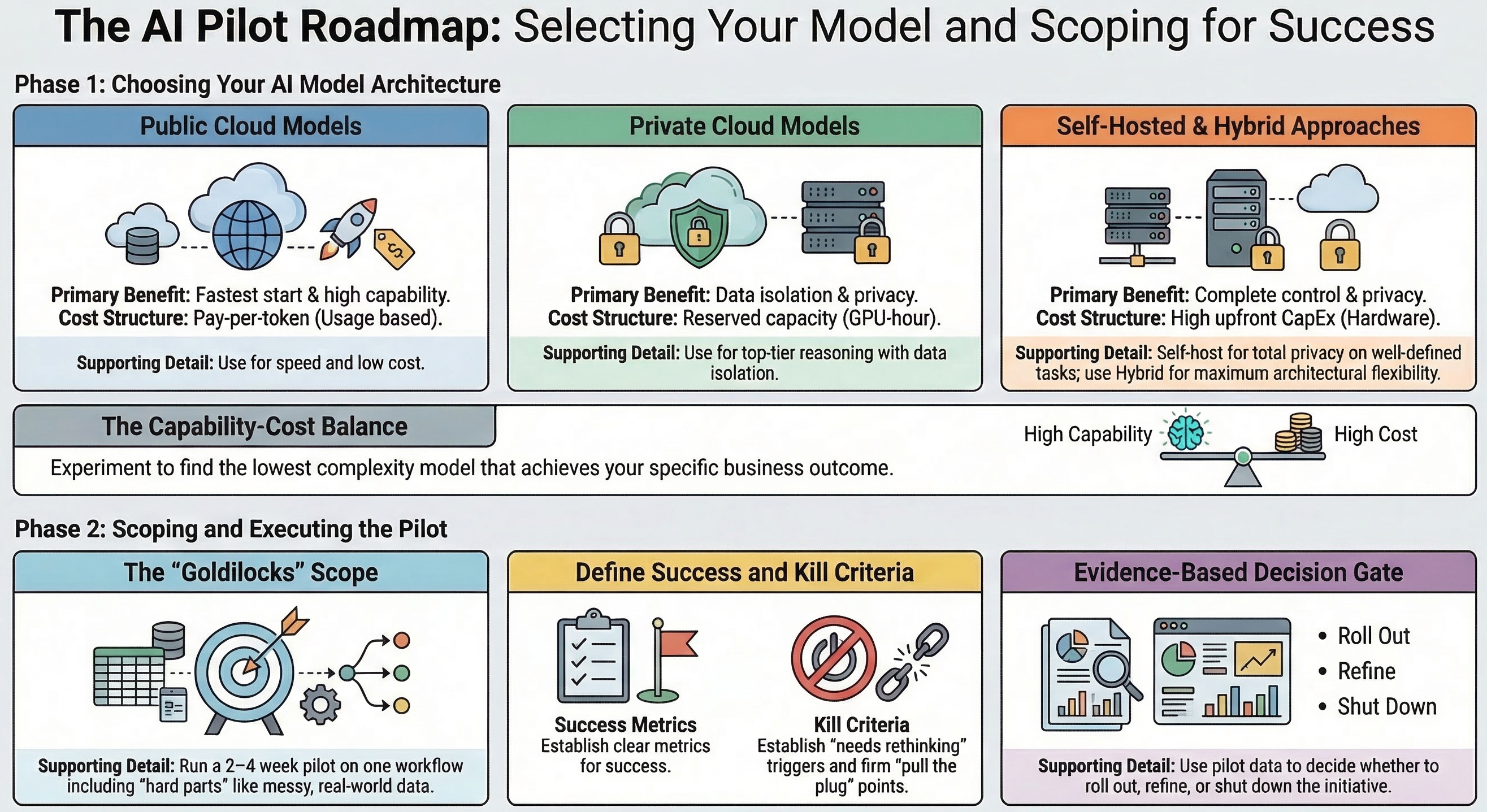

Choosing an AI Model

If your solution is going to use AI, a big decision is how you’re going to access the model that does the “thinking.” This is not a one-size-fits-all choice, and it can make or break a pilot. A model that isn’t capable enough will produce unsatisfactory results, and one that’s too capable (yes, that’s a real thing) can make the solution’s costs untenable.

There are several dimensions to consider: where the model needs to live, data privacy requirements, the capabilities you need (reasoning, image recognition, text-to-speech, code generation, and so on), usage costs, and the performance your use case demands.

I see four practical options:

Public AI model from a cloud vendor (think OpenAI, Anthropic, Groq, and many others). This gives you access to a wide range of models, including the most capable ones available today. You pay per token, which keeps upfront costs low. The trade-off is that your data leaves your systems, and if you’re in Canada, it almost certainly leaves the country. Nevertheless, for non-sensitive workloads, this is often the fastest and most cost-effective starting point.

Private AI model from a cloud vendor (Amazon Web Services, Microsoft Azure, Google Cloud Platform, and others). These can be very high performing, often offering the same frontier models available publicly, but in a private deployment where your data stays isolated. Depending on the service, you pay per token or for reserved capacity (e.g., per GPU-hour); reserved capacity makes costs more predictable but generally higher. This is the right choice when you need top-tier capability with genuine data privacy.

Self-hosted private AI model. You provide the hardware and run open-source models on it. The upfront capital expenditure can be significant, and you almost certainly won’t be running the latest or most capable models because the hardware requirements for frontier models are beyond what most businesses can justify. But you get full data privacy, no ongoing usage costs beyond maintaining your infrastructure, and complete control. For specific, well-defined tasks where a smaller model performs adequately, this can be very economical at scale.

Hybrid approach. In practice, many organizations will end up here. Use a local or self-hosted model for simpler tasks that a less capable model can handle, use a public cloud model when you need high capability without data privacy concerns, and use a private cloud model when you need both capability and data protection. This is the most flexible option, but it adds architectural complexity and requires clear policies about which data goes where.
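To make the “which data goes where” policy concrete, here is a minimal sketch of the three routing rules in the hybrid approach. The `Task` fields and the `route` function are hypothetical names chosen for illustration; a real policy would likely need finer-grained data classifications than a single yes/no flag.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    SELF_HOSTED = "self-hosted model"
    PUBLIC_CLOUD = "public cloud model"
    PRIVATE_CLOUD = "private cloud model"


@dataclass(frozen=True)
class Task:
    name: str
    needs_high_capability: bool    # assumption: a smaller model falls short on this task
    contains_sensitive_data: bool  # assumption: set by your own data policy


def route(task: Task) -> Backend:
    """Pick a backend using the three hybrid rules described above."""
    if not task.needs_high_capability:
        # Simpler tasks stay on the local or self-hosted model.
        return Backend.SELF_HOSTED
    if task.contains_sensitive_data:
        # High capability plus data protection: private cloud deployment.
        return Backend.PRIVATE_CLOUD
    # High capability without privacy concerns: public cloud model.
    return Backend.PUBLIC_CLOUD


if __name__ == "__main__":
    for task in (
        Task("tag incoming email", False, True),
        Task("draft marketing copy", True, False),
        Task("summarize client contract", True, True),
    ):
        print(f"{task.name}: {route(task).value}")
```

Writing the policy down as a small function like this also forces the question of who decides whether a task counts as sensitive, which is where most of the governance work sits.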

Choosing the right model (or combination of models) will almost certainly require experimentation. Plan for it! Budget time and money to try different options and benchmark them against your specific use case. The goal is to find the best combination of security, performance, and cost, not to pick the model with the most impressive benchmarks.
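When budgeting for that experimentation, a rough break-even calculation between per-token pricing and reserved capacity can help frame the cost side. The sketch below is only arithmetic: the function names are hypothetical, and the prices in the example are placeholders, not quotes from any vendor.

```python
def monthly_per_token_cost(tokens_per_month: float,
                           price_per_million_tokens: float) -> float:
    """Cost of a pay-per-token model for a given monthly volume."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_reserved_cost(gpu_hourly_rate: float,
                          gpus: int = 1,
                          hours_per_month: float = 730.0) -> float:
    """Cost of reserved capacity that runs all month (about 730 hours)."""
    return gpu_hourly_rate * gpus * hours_per_month


def break_even_tokens(price_per_million_tokens: float,
                      gpu_hourly_rate: float,
                      gpus: int = 1,
                      hours_per_month: float = 730.0) -> float:
    """Monthly token volume at which both pricing models cost the same."""
    reserved = monthly_reserved_cost(gpu_hourly_rate, gpus, hours_per_month)
    return reserved / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    price = 10.0     # currency units per million tokens
    gpu_rate = 4.0   # currency units per GPU-hour
    print(monthly_per_token_cost(50_000_000, price))
    print(monthly_reserved_cost(gpu_rate))
    print(break_even_tokens(price, gpu_rate))
```

Below the break-even volume, per-token pricing is cheaper; above it, reserved capacity starts to pay off, assuming the reserved hardware can actually serve that volume at acceptable speed.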

Decision Gate 3: Does Expected Benefit Exceed Cost and Risk?

At this point, you should have two or three solution scenarios, each with estimates for upfront cost, ongoing cost, implementation timeline, risk exposure, and governance implications. You should also have a clear picture of the AI model infrastructure required and its cost profile.

Now is the time to make a disciplined decision. Does the expected benefit (measured against the problem statement and success criteria you defined back in Phase 1) justify the investment and the risk? If the answer is yes, you’re ready to pilot. If not, this is the right place to stop, revisit, or shelve the initiative. There is no shame in stopping here; in fact, the discipline to stop is what separates a well-run evaluation from an expensive mistake.
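One simple way to put scenarios side by side is a risk-adjusted net benefit. This is a sketch under stated assumptions, not a prescribed formula: risk is collapsed into a single estimated probability of success, costs are assumed to be fully incurred even if the initiative fails, and governance implications are left out entirely. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    upfront_cost: float
    annual_ongoing_cost: float
    annual_benefit: float       # estimated against the Phase 1 baseline
    success_probability: float  # rough judgement between 0 and 1


def risk_adjusted_net_benefit(s: Scenario, years: float = 1.0) -> float:
    """Expected benefit minus total cost over the evaluation horizon."""
    expected_benefit = s.annual_benefit * years * s.success_probability
    total_cost = s.upfront_cost + s.annual_ongoing_cost * years
    return expected_benefit - total_cost


def passes_gate_3(s: Scenario, years: float = 1.0) -> bool:
    """True when expected benefit exceeds cost under these assumptions."""
    return risk_adjusted_net_benefit(s, years) > 0


if __name__ == "__main__":
    # Illustrative figures only.
    scenarios = [
        Scenario("automation platform", 5_000, 6_000, 40_000, 0.6),
        Scenario("custom application", 60_000, 12_000, 90_000, 0.5),
    ]
    for s in scenarios:
        net = risk_adjusted_net_benefit(s)
        print(f"{s.name}: net {net:,.0f}, proceed: {passes_gate_3(s)}")
```

The number itself matters less than the exercise of writing down each estimate, because it exposes which assumption the decision actually hinges on.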

Scoping the Pilot

Now, and only now, you are ready to start a pilot. Scoping it is a balancing act, and it’s easy to get wrong in both directions.

Too small and the pilot doesn’t test the assumptions that actually matter. If you scope it so narrowly that it avoids the hard parts (the messy data, the edge cases, the integration points), you’ll get a clean result that tells you nothing about whether the solution will work in production.

Too large and you’ve effectively skipped the pilot phase entirely. You’re spending production-level time and money without having validated the core assumptions first.

A well-scoped pilot has a few key characteristics:

- It focuses on one department or one workflow. Pick one area where the problem is most acute and where you have the best data and the most cooperative stakeholders.

- It includes the hard parts. If your solution depends on data quality, the pilot needs to use real data, not a curated sample. If it depends on user adoption, real users need to be involved. The purpose of a pilot is to surface problems early, not to prove a point in ideal conditions.

- It has a defined timeline. Two to four weeks is typical for smaller companies. This is long enough to encounter real-world variability, and short enough to maintain urgency and avoid scope creep.

- It instruments metrics from day one. Don’t wait until the end to figure out if it worked. Define what you’re measuring before you start and collect data continuously. This isn’t just about the outcome; it’s about understanding why the outcome is what it is.

- It has clear ownership. One executive sponsor, a small, defined team, and clear escalation paths. If the pilot doesn’t have a named owner taking responsibility for it, it will drift.
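Instrumenting from day one can be as light as recording one measurement per completed work item and comparing the running median to the baseline. The sketch below assumes the metric is turnaround time in days; `PilotLog` and its methods are hypothetical names, and a spreadsheet would serve the same purpose.

```python
from dataclasses import dataclass, field
from statistics import median
from typing import List, Optional


@dataclass
class PilotLog:
    baseline_days: float  # the measured baseline from Phase 1
    turnaround_days: List[float] = field(default_factory=list)

    def record(self, days: float) -> None:
        """Log the turnaround time of one completed work item."""
        self.turnaround_days.append(days)

    def median_so_far(self) -> Optional[float]:
        """Running median, or None before any data has been collected."""
        if not self.turnaround_days:
            return None
        return median(self.turnaround_days)

    def improvement_vs_baseline(self) -> Optional[float]:
        """Fractional improvement over baseline (0.4 means 40% faster)."""
        current = self.median_so_far()
        if current is None:
            return None
        return (self.baseline_days - current) / self.baseline_days


if __name__ == "__main__":
    log = PilotLog(baseline_days=5.0)
    for days in (4.0, 3.0, 2.0):
        log.record(days)
    print(log.median_so_far(), log.improvement_vs_baseline())
```

Keeping the individual measurements, not just a final average, is what lets you see later why the outcome is what it is.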

Defining Success — and Failure

Before the pilot begins, you need to answer three questions:

What does success look like? Tie it back to the measurable baseline you established in Phase 1. If the problem was “proposal turnaround time is 5 days,” then success might be “reduce to 2 days with equivalent or better quality.” Be specific. “It seems to be working well” is not a success metric.

What does “needs rethinking” look like? Not every pilot that falls short of its targets is a failure. Maybe the approach is sound but the scope was wrong, or the data needs more preparation, or the model needs tuning. Define upfront what would lead you to adjust and re-run rather than abandon.

What does failure look like? This is the question most companies skip. Define the conditions under which you pull the plug. This could be costs exceeding a threshold, output quality falling below a minimum, or adoption so low that the data isn’t meaningful. These are your kill criteria, and they are a necessary discipline. Without them, struggling pilots linger indefinitely, consuming resources and attention.
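Writing the three answers down as explicit thresholds before the pilot starts makes them hard to renegotiate afterwards. The following sketch uses the proposal turnaround example; the field names, the quality score scale, and the threshold values are all assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    NEEDS_RETHINKING = "needs rethinking"
    FAILURE = "failure"


@dataclass(frozen=True)
class PilotCriteria:
    target_turnaround_days: float  # success target, e.g. 2 days
    baseline_quality_score: float  # "equivalent or better quality"
    max_cost: float                # kill criterion
    min_quality_score: float       # kill criterion
    min_adoption_rate: float       # kill criterion, fraction of intended users


@dataclass(frozen=True)
class PilotResult:
    turnaround_days: float
    cost: float
    quality_score: float
    adoption_rate: float


def evaluate(c: PilotCriteria, r: PilotResult) -> Outcome:
    """Apply kill criteria first, then the success target."""
    if (r.cost > c.max_cost
            or r.quality_score < c.min_quality_score
            or r.adoption_rate < c.min_adoption_rate):
        return Outcome.FAILURE
    if (r.turnaround_days <= c.target_turnaround_days
            and r.quality_score >= c.baseline_quality_score):
        return Outcome.SUCCESS
    # Fell short of the target without tripping a kill criterion.
    return Outcome.NEEDS_RETHINKING


if __name__ == "__main__":
    criteria = PilotCriteria(2.0, 4.0, 10_000, 3.0, 0.5)
    print(evaluate(criteria, PilotResult(1.8, 8_000, 4.2, 0.8)).value)
    print(evaluate(criteria, PilotResult(3.5, 8_000, 4.1, 0.8)).value)
    print(evaluate(criteria, PilotResult(1.5, 12_000, 4.5, 0.9)).value)
```

Checking the kill criteria first is a deliberate choice in this sketch: a pilot that hits its turnaround target while blowing through the cost ceiling is still treated as a failure.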

Beyond these three questions, there are some common pitfalls I see in pilots that are worth watching for:

- Scope creep. “While we’re at it, let’s also…” is the most dangerous phrase in a pilot.

- Declaring success too early. A few impressive outputs don’t constitute a validated solution. Wait for the full timeline and the full dataset.

- Measuring the wrong thing. Activity metrics (how many times the tool was used) are not outcome metrics (did it actually improve the process).

- Losing the champion. If the executive sponsor moves on or loses interest mid-pilot, the initiative is at serious risk. Plan for continuity.

From Pilot to Decision

The pilot isn’t the end; it’s the evidence-gathering phase that feeds your ongoing evaluation. This connects directly to what I described in my last post as Decision Gate 4: is this delivering measurable business impact?

A well-run pilot gives you the data to answer that question honestly. It tells you whether to proceed to a broader rollout, refine the approach and re-pilot, or shut it down. All three are valid outcomes, and each one represents a return on the pilot investment. Even a “no” answer prevents a much larger waste of resources down the road.

The important thing is that the decision is based on evidence rather than enthusiasm.

Wrap Up

Next week, I’ll walk through a concrete scenario using an actual business problem to make this whole process tangible, from problem definition through pilot execution and evaluation.

This post is part of a series on the current state of AI, focused on how it can be applied in practical ways to deliver measurable improvements in productivity, cost savings, and response times. If you’d like to explore more, all previous posts are available under Insights; please read them and reach out with any questions or comments you have.