In my last post, I discussed the five structural reasons AI initiatives fail. The pattern is consistent: most failures stem from strategic, architectural, or operational errors, not the technology itself.

So how do you avoid those failures? In this post, I’ll walk through the framework I use when helping companies evaluate and implement AI. It’s the same structured approach whether the engagement involves AI or not, because the fundamentals of a successful initiative don’t change just because AI is part of the solution.

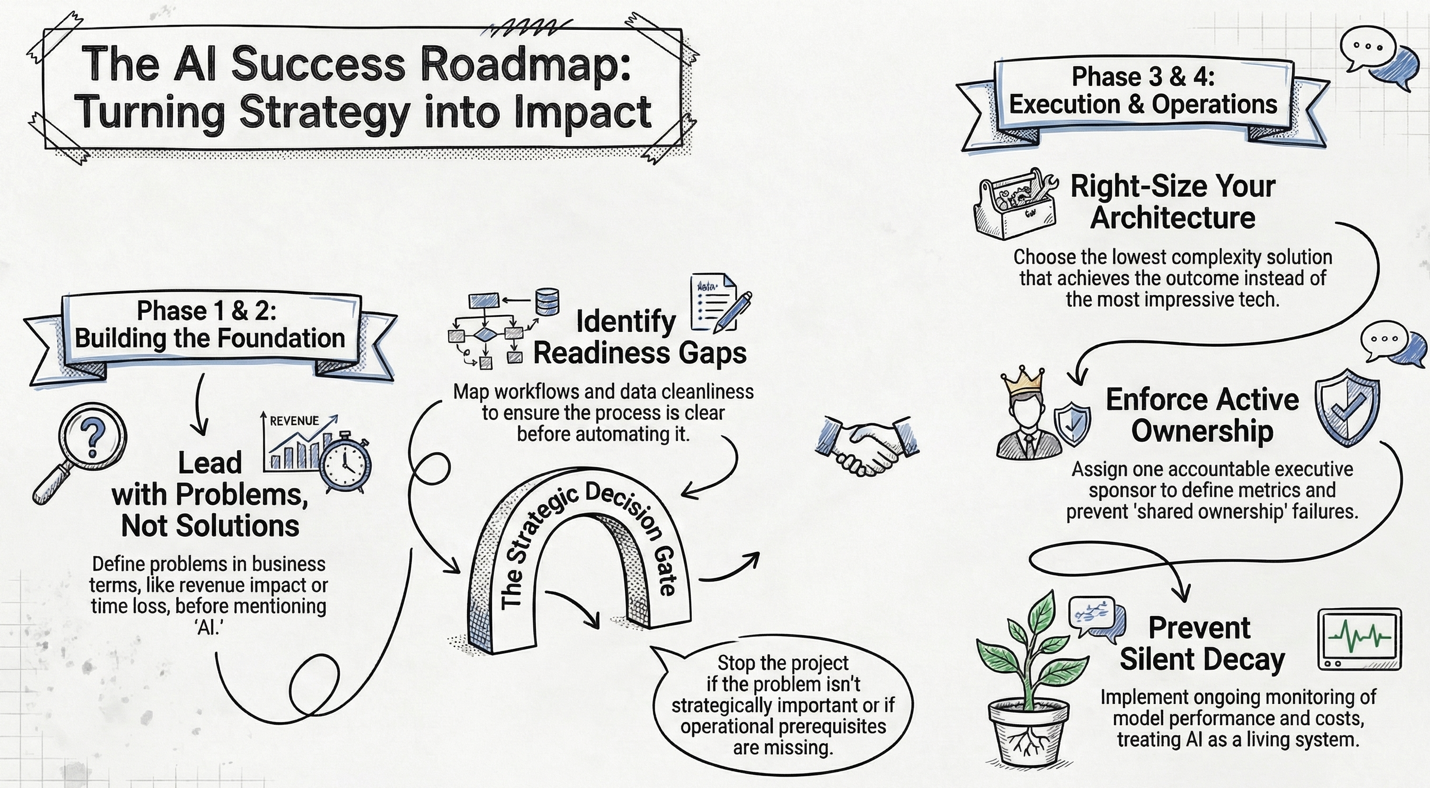

Phase 1 — Strategic Framing

Should we even be doing this?

The single most common mistake I see is companies starting with a solution instead of a problem. “We need AI” is not a problem statement. “We lose 18% of inbound leads due to slow follow-up” is.

This phase is about defining the problem in business terms – quantifying cost, time, risk, and revenue impact – and then prioritizing it honestly against other business constraints. For smaller companies in particular, even a genuine opportunity needs to compete against the reality of limited bandwidth and resources.
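To make "quantifying" concrete, here is a minimal sketch of turning the lead-loss example above into an annual dollar figure. Only the 18% loss rate comes from the example. The lead volume, close rate, and deal value are hypothetical placeholders you would replace with your own numbers, and the function name is mine.

```python
def annual_revenue_at_risk(leads_per_month: int, loss_rate: float,
                           close_rate: float, avg_deal_value: float) -> float:
    """Rough yearly revenue lost to leads that drop out before follow-up."""
    lost_leads_per_year = leads_per_month * 12 * loss_rate
    # Only a fraction of the lost leads would have closed anyway.
    return lost_leads_per_year * close_rate * avg_deal_value


# Hypothetical inputs: 200 leads/month, 25% close rate, $4,000 average deal.
estimate = annual_revenue_at_risk(
    leads_per_month=200, loss_rate=0.18, close_rate=0.25, avg_deal_value=4_000
)
print(f"Estimated revenue at risk: ${estimate:,.0f} per year")
```

Even a back-of-the-envelope figure like this gives you something to rank against the other demands on your team's time.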

When I run an AI Discovery Day engagement, this is where the morning starts: interviewing leadership, walking through key processes, and understanding the technology and data landscape. The goal is to understand your world before anyone says the word “AI.”

Decision Gate: Is this problem strategically important right now? Many initiatives should stop here.

Phase 2 — Feasibility & Readiness

Can we realistically solve this?

Once a problem is worth solving, the next step is mapping the current workflow in detail. Who touches it? What systems are involved? Where do exceptions live? Where does data come from, and how clean is it?

You’d be surprised how often companies skip this. They jump from “we have a problem” to “let’s buy a tool” without understanding the process they’re trying to improve. If the process isn’t clear to the people running it, AI will not make it clearer.

This is also where you identify readiness gaps: no clean data, no process owner, no internal technical skill, no monitoring capability, no governance policy. The goal is not only to identify solutions but also to identify missing prerequisites. In my AI Discovery Day process, this maps to the Opportunity Mapping phase, where the team collaboratively identifies and prioritizes opportunities using a structured framework.

Decision Gate: Are we operationally ready to attempt this? If not, solve readiness first.

Phase 3 — Solution Architecture

What category of solution fits?

This is where earlier posts in the series come together. The solution options range from process redesign (no tech at all) through off-the-shelf tools, automation platforms, and autonomous agents to custom development. The right question is “What is the lowest-complexity solution that achieves the outcome?”, not “What is the most impressive?”

For each viable option, develop two or three scenarios estimating upfront cost, ongoing cost, implementation timeline, risk exposure, scalability, and governance implications. This forces discipline and avoids the “we just liked the demo” trap.

Decision Gate: Does expected benefit exceed cost and risk?
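One way to keep the scenario comparison honest is to put the estimates side by side. The sketch below is illustrative only: the `Scenario` fields, the three-year horizon, the use of a success probability as a crude stand-in for risk, and every number are assumptions of mine, not a prescribed model. Options are listed from least to most complex, and the first one that clears the gate wins.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    upfront_cost: float
    annual_ongoing_cost: float
    annual_benefit: float
    success_probability: float  # crude stand-in for risk exposure (assumption)

    def risk_adjusted_net(self, years: int = 3) -> float:
        """Expected benefit minus total cost over the chosen horizon."""
        expected_benefit = self.annual_benefit * years * self.success_probability
        total_cost = self.upfront_cost + self.annual_ongoing_cost * years
        return expected_benefit - total_cost


# Hypothetical options, ordered from lowest to highest complexity.
scenarios = [
    Scenario("Process redesign", 5_000, 0, 40_000, 0.9),
    Scenario("Off-the-shelf tool", 10_000, 12_000, 70_000, 0.8),
    Scenario("Custom development", 150_000, 30_000, 120_000, 0.5),
]

for s in scenarios:
    print(f"{s.name}: {s.risk_adjusted_net():,.0f}")

# Decision gate: take the least complex option whose expected benefit
# exceeds its cost and risk; None means nothing passes.
first_viable = next((s for s in scenarios if s.risk_adjusted_net() > 0), None)
```

Note that with these made-up numbers the off-the-shelf tool has the highest net value, but the simplest option already clears the gate. Whether the extra return justifies the extra complexity is a judgment call the numbers can inform but not make for you.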

Phase 4 — Implementation & Operationalization

This is where most failures happen.

Implementation requires three things that smaller companies routinely underestimate: ownership, controlled scope, and ongoing monitoring.

Ownership means one accountable executive sponsor – not IT ownership, not “shared ownership.” That person defines success metrics, a monitoring plan, a review cadence, and an escalation path.

Controlled scope means piloting in one department, keeping the pilot narrow, and instrumenting metrics from day one. No “big bang” rollout.

Ongoing monitoring means reviewing output quality, tracking costs, evaluating model performance, and logging edge cases. As I discussed in my previous post, AI is closer to a living system than any other technology you use. Without monitoring, performance degrades silently.

Decision Gate (Ongoing): Is this delivering measurable business impact? If not, refine or shut it down.
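As a rough illustration of what a review cadence with an escalation path can look like, here is a minimal sketch. The record fields, the three-week window, and the thresholds are all hypothetical. In practice the owner of the initiative would define them as part of the monitoring plan.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WeeklyReview:
    week: int
    quality_score: float    # share of sampled outputs rated acceptable (0 to 1)
    cost: float             # total spend for the week
    edge_cases_logged: int


def escalation_reasons(reviews, baseline_quality, weekly_budget,
                       max_quality_drop=0.05, window=3):
    """Return the reasons (if any) the recent reviews should be escalated."""
    recent = reviews[-window:]
    if not recent:
        return []
    reasons = []
    # Averaging over a window catches slow drift, not just one bad week.
    if baseline_quality - mean(r.quality_score for r in recent) > max_quality_drop:
        reasons.append("quality below baseline")
    if mean(r.cost for r in recent) > weekly_budget:
        reasons.append("cost over budget")
    return reasons


# Hypothetical pilot data: quality slides week over week while cost stays in budget.
reviews = [
    WeeklyReview(1, 0.92, 400, 2),
    WeeklyReview(2, 0.90, 410, 3),
    WeeklyReview(3, 0.85, 420, 5),
    WeeklyReview(4, 0.82, 430, 6),
    WeeklyReview(5, 0.80, 450, 9),
]
reasons = escalation_reasons(reviews, baseline_quality=0.92, weekly_budget=500)
print(reasons or "No escalation needed")
```

The point is less the code than the habit: no single week here looks alarming on its own, which is exactly how silent degradation goes unnoticed without a baseline and a threshold.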

How This Maps to the Discovery Day

The framework above is exactly what drives my AI Discovery Day engagements. In a single day on-site, we work through the Discovery phase (strategic framing and process understanding), a tailored Education session (so your team understands the tools relevant to your business), a collaborative Opportunity Mapping workshop (feasibility, readiness, and solution options), and a same-day Readout of preliminary findings across People, Processes, Data, and Technology.

Within a week, you receive a written AI Roadmap Report with prioritized recommendations, a readiness assessment, and an evaluation framework – everything you need to move forward with confidence, whether you continue working with us or not.

You can read more about the AI Discovery Day in the Services section of this website.

Wrap Up

In my next post, I’ll shift from strategy to execution and look at what happens when you actually start building: the practical considerations of running an AI pilot.

This post is part of a series on the current state of AI, focused on how it can be applied in practical ways to deliver measurable improvements in productivity, cost savings, and response times. If you’d like to explore more, all previous posts are available under Insights; please read them and reach out with any questions or comments you have.